CHOM5KY vs CHOMSKY

World Premiere November 4 – 20, 2022

Artificial intelligence is omnipresent: from image editing programs in smartphones to self-parking cars and a virtual assistant in the kitchen. The attempt to transfer human learning and thinking to computers and give them intelligence is a prominent phenomenon of our time. But what exactly is AI?


Part of the Berlin Science Week 2022

In November 2022, the German-Canadian co-production CHOM5KY vs CHOMSKY will celebrate its world premiere in Berlin. When machine intelligence is praised as an inevitable and necessary technology of the present and future, one should ask: What are we hoping for? And what price are we willing to pay?

If we know so little about the human mind, what exactly are we trying to replicate with AI? To what end? And what are we leaving behind?

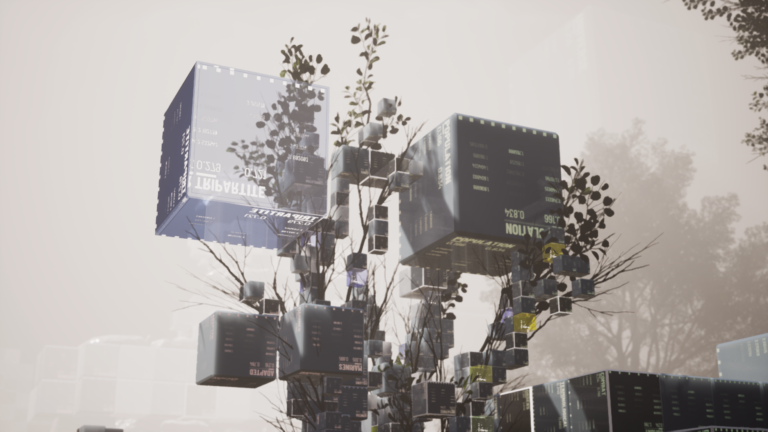

CHOM5KY vs CHOMSKY is a mixed reality installation that invites visitors to a thought-provoking encounter with artificial intelligence, guided by a virtual host inspired by and built on the digital traces of renowned linguist Noam Chomsky. Putting the user front and centre, CHOM5KY serves as a mirror, reflecting back what people discover about themselves during the experience.

This interactive, multi-user, playful and collaborative experience allows users to examine the promises and pitfalls of AI in a fun and engaging way, while asking what we are hoping to achieve, what we are trying to recreate, and at what cost.

Various activities are designed to highlight three essential traits of human intelligence: creativity, inquiry and collaboration. Through these different forms of play, visitors learn about the limitations and possibilities of machines and, in particular, about each other as human beings.

More than glue

You might ask why this appears in this blog. Good question! From a technological point of view, the installation has three main components: rendering, backend and logic. Rendering is done in Unreal Engine and the backend runs on web servers, while vvvv orchestrates these systems. The human language interface (speech-to-text and text-to-speech) is also implemented in vvvv. This workflow helped us keep development cycles for the logic short and test early.

Credits

CHOM5KY vs CHOMSKY was created by Sandra Rodriguez. A co-production of the National Film Board of Canada and SCHNELLE BUNTE BILDER, with support from Medienboard Berlin-Brandenburg.

Design, Code and Scenography:

Sebastian Huber

Johannes Lemke

Max Seeger

Johannes Timpernagel

Felix Worseck

Michael Burk

Back-End Architect

Cindy Sherman Bishop

AI Lead

Moov AI

Composition and Immersive Audio

kling klang klong

Producer

Uschi Feldges

Carl-Johannes Schulze

Further questions?

Get in touch: chomsky@schnellebuntebilder.de
